See the NIST Statistics Handbook page 6.5.3.2. The covariance matrix determinant can be interpreted as generalized variance.

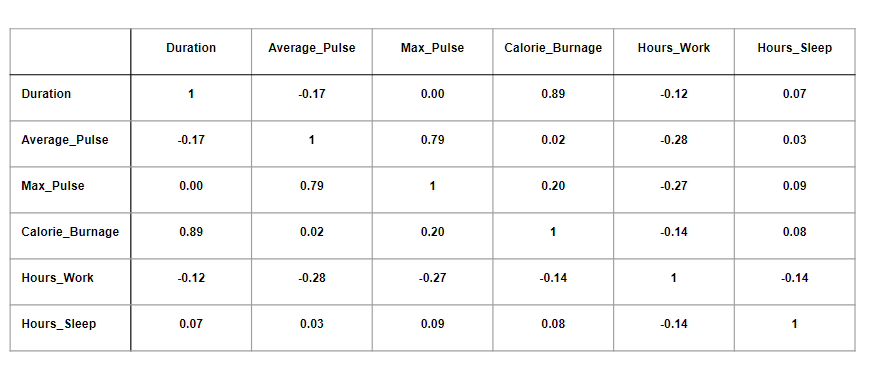

Alexander Vigodner's answer in the same thread says it also possesses the property of positivity.See tmp's answer at What does Determinant of Covariance Matrix give? for details. In the case of a Gaussian distribution, the determinant indirectly measures differential entropy, which can be construed as dispersion of the data points across the volume of the matrix.Since determinants represent signed volumes, I expected those pertaining to these four types of matrices would translate into multidimensional association measures of some sort this turned out to be true to some extent, but a few of them exhibit interesting properties: I was able to cobble together some general principles, use cases and properties of these matrices from a desultory set of sources few of them address these topics directly, with most merely mentioned in passing. It's cheaper to just calculate the covariance and correlation in a one-on-one, bivariate manner in these particular languages, but I'll go the extra mile and implement determinant calculations if I can derive some deeper insights that justify the expense in terms of programming resources. My question is not pressing, but in the long run I will have to make decisions on whether or not it's worthwhile to regularly include these matrices and their determinants in my exploratory data mining processes. I'm not familiar enough with the topic or the heavy matrix math involved to speculate further, but I am capable of coding all four types of matrices and their determinants. That also begs the question of what kind of volume their inverses would represent. over an entire set, or something to that effect (as opposed to ordinary covariance and correlation, which are between two attributes or variables). Is there a practical reason why they're not discussed often in the literature on data mining? More importantly, do they provide any useful information in a stand-alone fashion and if so, how could I interpret the determinants of each? 
I realize that determinants are a type of signed volume induced by a linear transformation, so I suspect that the determinants of these particular determinants might signify some kind of volumetric measure of covariance or correlation etc. Most of the mentions I have encountered revolve around using the determinants as a single step in the process of calculating other statistical tests and algorithms, such as Principle Components Analysis (PCA) and one of Hotelling’s tests none directly addresses how to interpret these determinants, on their own. Since determinants are frequently calculated for other types of matrices, I expected to find a slew of information on them, but I've turned up very little in casual searches of both the StackExchange forums and the rest of the Internet. One thing I have yet to see directly addressed in the literature, however, is how to interpret the determinants of these matrices. One obvious example is the presence of variances on the diagonals of covariance matrices some less obvious examples that I have yet to use, but could come in handy at some point, are the variance inflation factors in inverse correlation matrices and partial correlations in inverse covariance matrices. While learning to calculate covariance and correlation matrices and their inverses in VB and T-SQL a few years ago, I learned that the various entries have interesting properties that can make them useful in the right data mining scenarios.
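The quantities discussed above can be computed side by side to see how they relate. What follows is only a minimal sketch in pure Python, not the VB or T-SQL code referred to in the text: the toy data and the helper names (`covariance_matrix`, `correlation_matrix`, `inverse_and_det`) are hypothetical, and it assumes a sample covariance with an n - 1 denominator and a non-singular matrix.

```python
import math

def covariance_matrix(rows):
    """Sample covariance matrix (n - 1 denominator); rows are observations."""
    n, k = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(k)]
    return [[sum((r[i] - means[i]) * (r[j] - means[j]) for r in rows) / (n - 1)
             for j in range(k)] for i in range(k)]

def correlation_matrix(cov):
    """Rescale a covariance matrix by the standard deviations on its diagonal."""
    k = len(cov)
    sd = [math.sqrt(cov[i][i]) for i in range(k)]
    return [[cov[i][j] / (sd[i] * sd[j]) for j in range(k)] for i in range(k)]

def inverse_and_det(m):
    """Gauss-Jordan elimination with partial pivoting.

    Returns (inverse of m, determinant of m)."""
    k = len(m)
    # Augment m with the identity matrix; the right half becomes the inverse.
    a = [list(row) + [float(i == j) for j in range(k)] for i, row in enumerate(m)]
    det = 1.0
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular to working precision")
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det  # a row swap flips the sign of the determinant
        p = a[col][col]
        det *= p
        a[col] = [v / p for v in a[col]]
        for r in range(k):
            if r != col:
                f = a[r][col]
                a[r] = [v - f * w for v, w in zip(a[r], a[col])]
    return [row[k:] for row in a], det

# Hypothetical toy data: 6 observations of 3 variables.
data = [
    [2.0, 1.0, 5.0],
    [3.0, 2.5, 4.0],
    [4.0, 2.0, 6.5],
    [5.0, 4.5, 5.5],
    [6.0, 4.0, 8.0],
    [7.0, 6.0, 7.0],
]

cov = covariance_matrix(data)
corr = correlation_matrix(cov)
cov_inv, det_cov = inverse_and_det(cov)
corr_inv, det_corr = inverse_and_det(corr)
k = len(cov)

# Entry-level properties mentioned in the text.
variances = [cov[i][i] for i in range(k)]   # diagonal of the covariance matrix
vifs = [corr_inv[i][i] for i in range(k)]   # diagonal of the inverse correlation matrix
partial = [[1.0 if i == j
            else -cov_inv[i][j] / math.sqrt(cov_inv[i][i] * cov_inv[j][j])
            for j in range(k)] for i in range(k)]  # partial correlations

# Determinant-level summaries.
generalized_variance = det_cov
# Differential entropy of a Gaussian with this covariance: 0.5 * ln((2*pi*e)^k * det).
gaussian_entropy = 0.5 * (k * math.log(2 * math.pi * math.e) + math.log(det_cov))
det_cov_inv = 1.0 / det_cov    # determinant of the inverse covariance matrix
det_corr_inv = 1.0 / det_corr  # determinant of the inverse correlation matrix

print("generalized variance:", generalized_variance)
print("det(correlation):", det_corr)
print("Gaussian differential entropy (nats):", gaussian_entropy)
print("variance inflation factors:", vifs)
```

Two identities tie the four determinants together: the correlation determinant equals the covariance determinant divided by the product of the variances, and each inverse's determinant is the reciprocal of the original's. The correlation determinant therefore lies between 0 and 1, approaching 1 when the variables are nearly uncorrelated and 0 when they are nearly collinear. In practice a library routine such as NumPy's `np.linalg.det` would replace the hand-rolled elimination.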
